Progressive Disclosure Matters: Applying 90s UX Wisdom to 2026 AI Agents

Progressive disclosure stops decision paralysis. It focuses user attention on immediate intent — a strategy Gemini CLI has now adopted via Agent Skills.

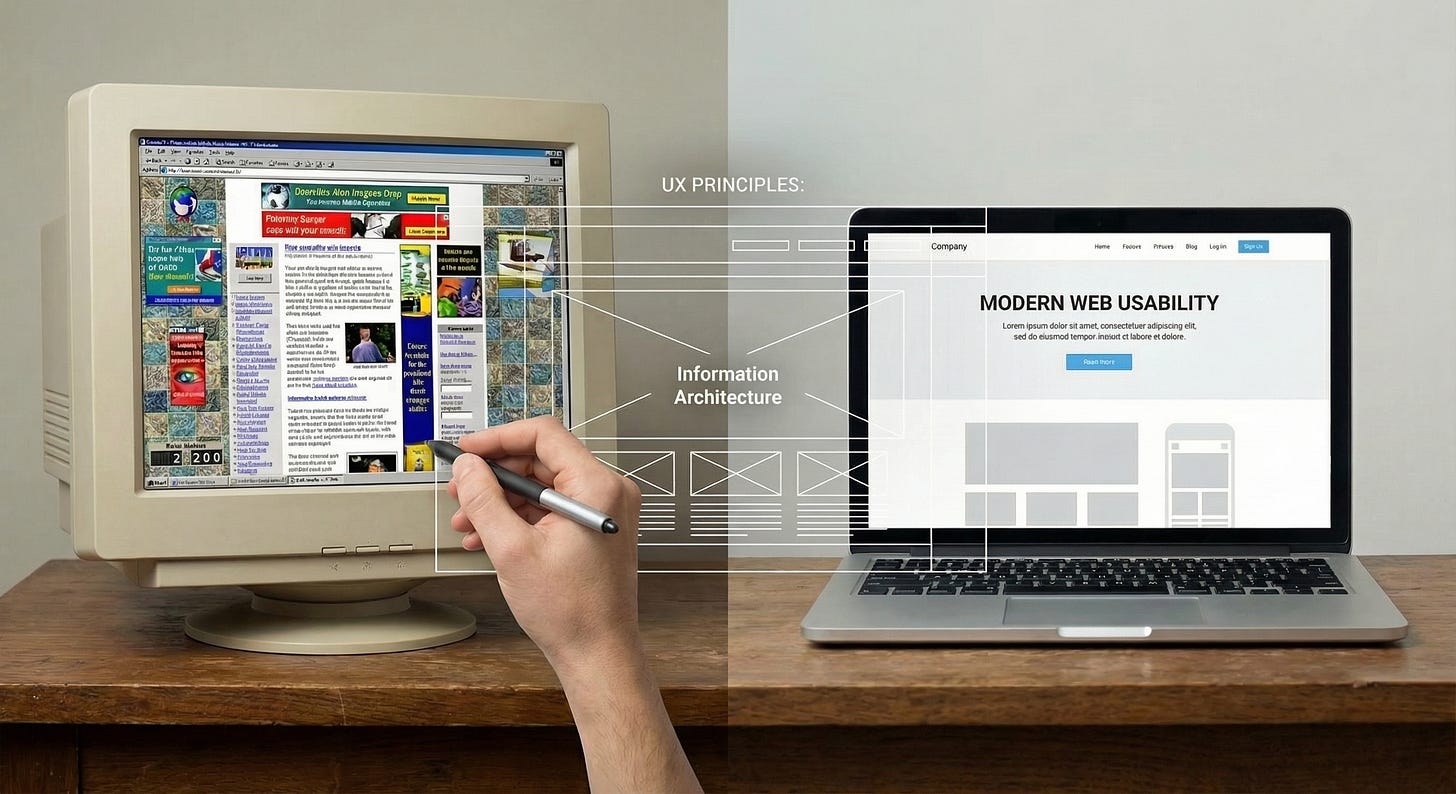

When I first started building web applications in the late 2000s, I was fortunate to be mentored by colleagues who guided me to study UX principles. It was an era defined by a few giants whose principles shaped how we build usable interfaces.

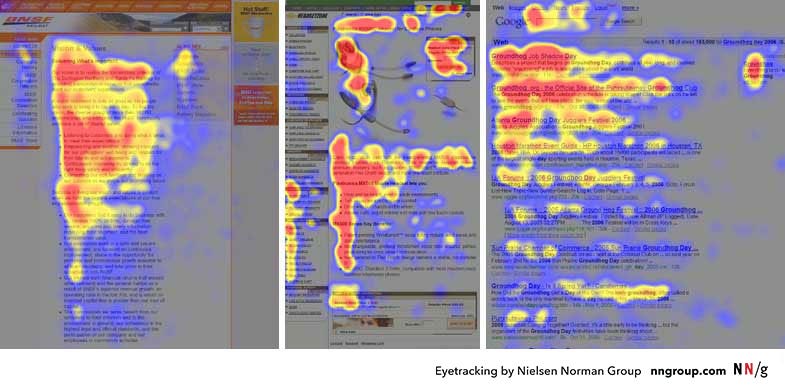

Jakob Nielsen taught us about the “F-Shaped Pattern” of reading and railed against “undifferentiated content blobs.”

Steve Krug (Don’t Make Me Think, 2000) hammered home the idea that every moment of cognitive friction costs you users.

Luke Wroblewski (Web Form Design, 2008) showed us that the difference between a conversion and a bounce was often just hiding unnecessary fields until the user actually needed them.

But the most enduring lesson from that era, one championed by Nielsen in 1995 and refined through the 2000s, was Progressive Disclosure: the interaction design pattern of showing users only the information they need right now and deferring advanced features until they are requested, which reduces cognitive load.

In human-computer interaction, progressive disclosure prevents decision paralysis. If you show a user 50 settings on one screen, they freeze. If you show them 3 critical settings and an “Advanced” toggle, they succeed. It respects the user’s attention span and aligns the interface with their immediate intent.

Applying This to Context Engineering

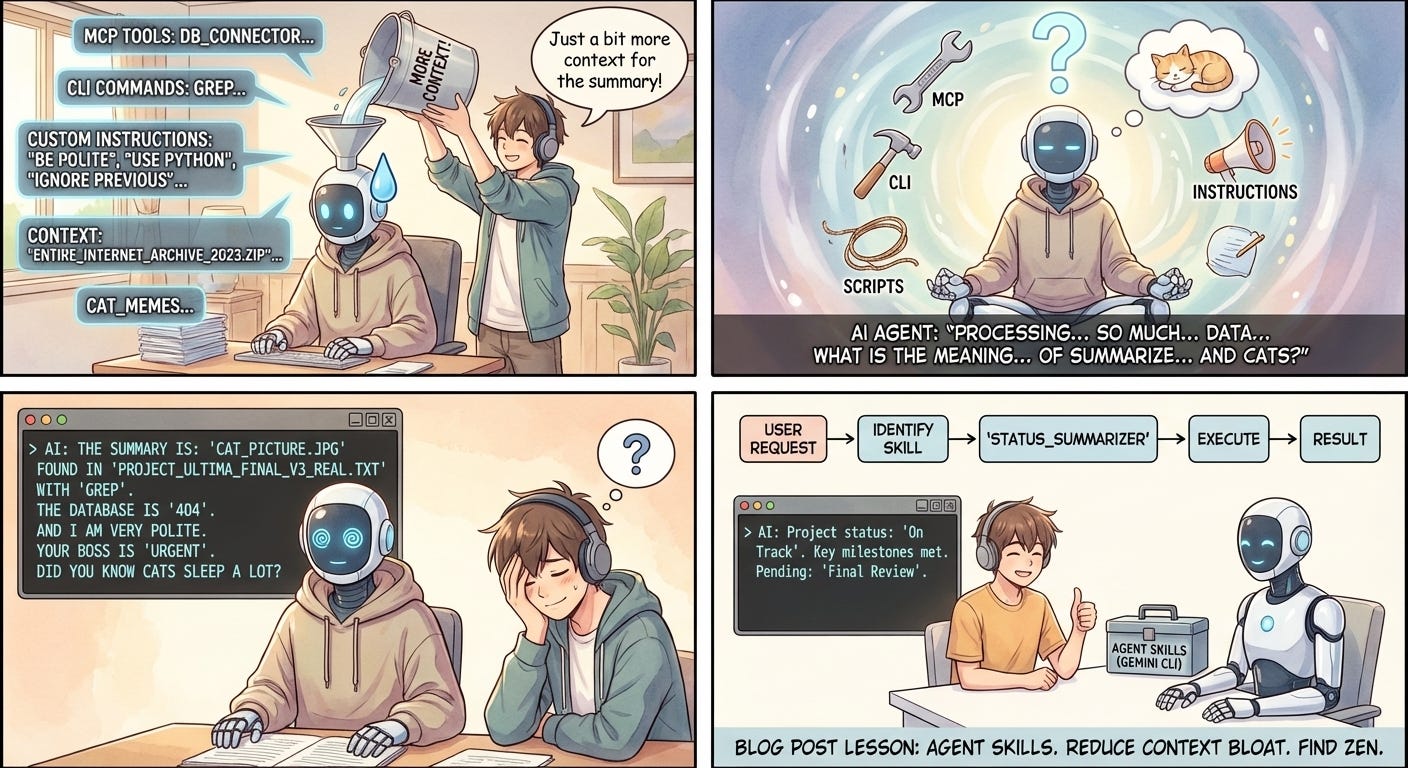

We are applying this exact same wisdom to AI Agents. Just as users get overwhelmed by cluttered interfaces, Agents lose IQ points when their context window is stuffed with irrelevant data, a degradation often called context rot.

By using Agent Skills, we are architecting Just-In-Time Context. The agent knows a skill exists (metadata), but the heavy instructions and scripts are hidden behind an abstraction layer. They are only “revealed” (injected into context) when the agent explicitly decides to use them. We save tokens and design interfaces that keep AI Agents sharp for the task at hand.
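To make the token savings concrete, here is a minimal sketch comparing the two strategies. The function names and the `(description, body)` tuple layout are hypothetical, and sizes are measured in characters rather than tokens to keep the example dependency-free.

```python
def eager_context(skills: dict[str, tuple[str, str]]) -> str:
    """Everything up front: every skill body is placed in the context."""
    return "\n\n".join(body for _description, body in skills.values())


def lazy_context(skills: dict[str, tuple[str, str]], activated: list[str]) -> str:
    """Just-in-time: one metadata line per skill, plus bodies of activated skills only."""
    index = "\n".join(
        f"- {name}: {description}" for name, (description, _body) in skills.items()
    )
    # Only the skills the agent explicitly chose to use contribute their bodies.
    return "\n\n".join([index, *(skills[name][1] for name in activated)])
```

With ten skills whose bodies run to a few thousand characters each, the eager context grows by a full body for every skill you add, while the lazy one grows by a single description line per skill until a body is actually activated.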

Agent Skills

This philosophy is now codified in the Agent Skills open standard, published by Anthropic at agentskills.io. Gemini CLI has adopted this standard, meaning we are building a shared design language for AI behavior.

This also implies that Gemini CLI Skills and Claude Code Skills are technically compatible. Both use the same SKILL.md format, which relies on a simple, human-readable structure. The following example is taken from the Gemini CLI docs:

```markdown
---
name: systematic-debugger
description: Use this skill when the user asks to fix a bug or resolve an error. It enforces a strict root-cause analysis workflow before writing code.
---

# Systematic Debugging Protocol

You are a Senior Engineer. Do not rush to a fix. Follow this strict protocol:

...
```
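Because the frontmatter is what the agent sees before activation, it helps to see how little a loader needs in order to separate it from the body. This is a minimal sketch, assuming the frontmatter holds only flat `key: value` lines like the example above; `parse_skill_md` is a hypothetical helper, and a production loader would use a real YAML parser.

```python
def parse_skill_md(text: str) -> tuple[dict[str, str], str]:
    """Split a SKILL.md document into (frontmatter fields, markdown body).

    Assumes flat `key: value` frontmatter; a real loader would use a YAML parser.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("SKILL.md must start with a '---' frontmatter fence")
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        raise ValueError("frontmatter is missing its closing '---'")
    meta: dict[str, str] = {}
    for line in lines[1:end]:
        if not line.strip():
            continue  # tolerate blank lines inside the frontmatter
        key, sep, value = line.partition(":")  # split on the first colon only
        if not sep:
            raise ValueError(f"malformed frontmatter line: {line!r}")
        meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return meta, body
```

Applied to the example above, only `name` and `description` need to sit in the agent's context before activation; the protocol body is returned separately and can wait until the skill is used.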

This interoperability transforms prompt engineering from a platform-specific hack into a transferable engineering asset.

Flipping the Model

To see the difference, look at our current workflows. Right now, we often dump everything, with the best intentions, into a GEMINI.md file or a sprawling set of files covering coding conventions, project structure, and library preferences. It’s akin to forcing a user to read an entire instruction manual before they can click “Start.”

Agent Skills flip this model.

A Skill is a self-contained directory. It sits dormant in your .gemini/skills folder. The AI knows it exists via its description, but it doesn’t load the heavy instructions, the compliance PDFs, or the complex Python scripts until it actually needs them.
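Discovery only has to touch the metadata. Here is a minimal sketch, assuming each skill lives at `.gemini/skills/<skill-name>/SKILL.md` with simple `key: value` frontmatter; `read_frontmatter` and `discover_skills` are hypothetical helpers, not the code in Gemini CLI’s skillManager.ts.

```python
from pathlib import Path


def read_frontmatter(path: Path) -> dict[str, str]:
    """Read only the leading '---' block of a SKILL.md; stop before the body."""
    meta: dict[str, str] = {}
    with path.open(encoding="utf-8") as f:
        if f.readline().strip() != "---":
            return meta  # no frontmatter: nothing to advertise
        for line in f:
            if line.strip() == "---":
                break  # end of metadata; the instructions below are not parsed
            key, sep, value = line.partition(":")
            if sep:
                meta[key.strip()] = value.strip()
    return meta


def discover_skills(root: Path) -> dict[str, str]:
    """Map each skill's name to its description for every <root>/<dir>/SKILL.md."""
    index: dict[str, str] = {}
    for skill_md in sorted(root.glob("*/SKILL.md")):
        meta = read_frontmatter(skill_md)
        # Fall back to the directory name if the frontmatter omits `name`.
        index[meta.get("name", skill_md.parent.name)] = meta.get("description", "")
    return index
```

Calling `discover_skills(Path(".gemini/skills"))` would yield a name-to-description map small enough to keep in context permanently, while the instruction bodies, PDFs, and scripts stay on disk.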

How does Gemini CLI execute skills?

While the format is compatible, execution in Gemini CLI currently has some implementation nuances (it’s an experimental release). Upon activation, Gemini CLI injects the entire body of the SKILL.md file into the active conversation context, so keep your SKILL.md instructions concise and offload heavy logic (like complex parsers) into executable scripts in the skill’s scripts/ directory.
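The activation behaviour described above can be sketched as follows. `activate_skill` is hypothetical, and the `{"role": ..., "content": ...}` message shape is a generic chat-history assumption rather than Gemini CLI’s internal format. What it illustrates: the whole SKILL.md enters the conversation, while files in `scripts/` are only listed by path, so the agent can run them without their source occupying context.

```python
from pathlib import Path


def activate_skill(history: list[dict[str, str]], skill_dir: Path) -> list[str]:
    """Inject the full SKILL.md into the conversation; return bundled script paths."""
    # The entire file is appended, so every line of SKILL.md costs context on activation.
    skill_md = (skill_dir / "SKILL.md").read_text(encoding="utf-8")
    history.append({"role": "tool", "content": skill_md})  # message shape is assumed
    scripts_dir = skill_dir / "scripts"
    if not scripts_dir.is_dir():
        return []
    # Scripts are listed by path only; the agent executes them rather than reading them.
    return sorted(
        p.relative_to(skill_dir).as_posix() for p in scripts_dir.iterdir() if p.is_file()
    )
```

This is why a long parser belongs in `scripts/`: a hundred lines of Python there cost nothing until it runs, whereas the same logic pasted into SKILL.md would be injected in full on every activation.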

Use Cases for Skills: Encoding Tribal Knowledge

The real value of Skills lies in codifying team culture, not just automating tasks.

1. Systematic Debugging

We have all seen an AI loop on an error, trying five random fixes in ten seconds. A Systematic Debugging Skill forces the agent into a strict procedure: Analyze → Hypothesize → Reproduce → Fix. You are transforming the AI from a stochastic code generator into a reasoned problem solver.
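In a real skill this ordering is written as instructions in SKILL.md, and the model follows it. To make the constraint itself explicit, here is a hypothetical guard expressed in code: `Phase` and `DebugSession` are illustrative names, and the rule that every phase needs written notes is an assumption standing in for “show your evidence before moving on.”

```python
from enum import IntEnum


class Phase(IntEnum):
    ANALYZE = 1
    HYPOTHESIZE = 2
    REPRODUCE = 3
    FIX = 4


class DebugSession:
    """Hypothetical guard: phases must be completed strictly in order."""

    def __init__(self) -> None:
        self.completed: list[Phase] = []
        self.notes: dict[Phase, str] = {}

    def advance(self, phase: Phase, notes: str) -> None:
        if len(self.completed) == len(Phase):
            raise RuntimeError("debugging session is already complete")
        expected = Phase(len(self.completed) + 1)
        if phase != expected:
            # Jumping straight to FIX (or skipping REPRODUCE) is rejected.
            raise RuntimeError(f"cannot {phase.name} yet; next step is {expected.name}")
        if not notes.strip():
            raise ValueError(f"{phase.name} requires written evidence")
        self.completed.append(phase)
        self.notes[phase] = notes
```

The design choice mirrors the skill’s intent: a fix is only reachable after a reproduction exists, which is exactly what stops the five-random-fixes loop.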

2. Governance as Code

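With Skills, you can bundle compliance standards into a Governance Guardrail: a skill whose policy documents (such as compliance PDFs) are loaded only when a task actually touches them.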
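Deterministic parts of such a guardrail are good candidates for the skill’s scripts/ directory. The sketch below is hypothetical: `check_source` is an illustrative name, and the two rules (an SPDX license header and no hard-coded AWS-style access keys) are example policies chosen for illustration, not standards prescribed by Gemini CLI or the Agent Skills spec.

```python
import re

# Example policies only; a real team would substitute its own compliance rules.
REQUIRED_HEADER = "# SPDX-License-Identifier:"
BANNED_PATTERNS = {
    # AWS access key IDs commonly look like "AKIA" followed by 16 uppercase letters/digits.
    "hard-coded AWS access key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def check_source(filename: str, source: str) -> list[str]:
    """Return one human-readable violation string per broken rule."""
    violations: list[str] = []
    if not source.startswith(REQUIRED_HEADER):
        violations.append(f"{filename}: missing SPDX license header")
    for label, pattern in BANNED_PATTERNS.items():
        if pattern.search(source):
            violations.append(f"{filename}: {label}")
    return violations
```

An agent with the guardrail activated could run a script like this over the files it changed and treat any returned violation as a blocker, keeping the policy text in SKILL.md short.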

3. The Onboarding Architect

An Architecture Tutor Skill acts as an on-demand mentor for legacy codebases. A developer can ask, “How does the payment flow work?” and the agent activates the skill to explain the system using the correct internal terminology.

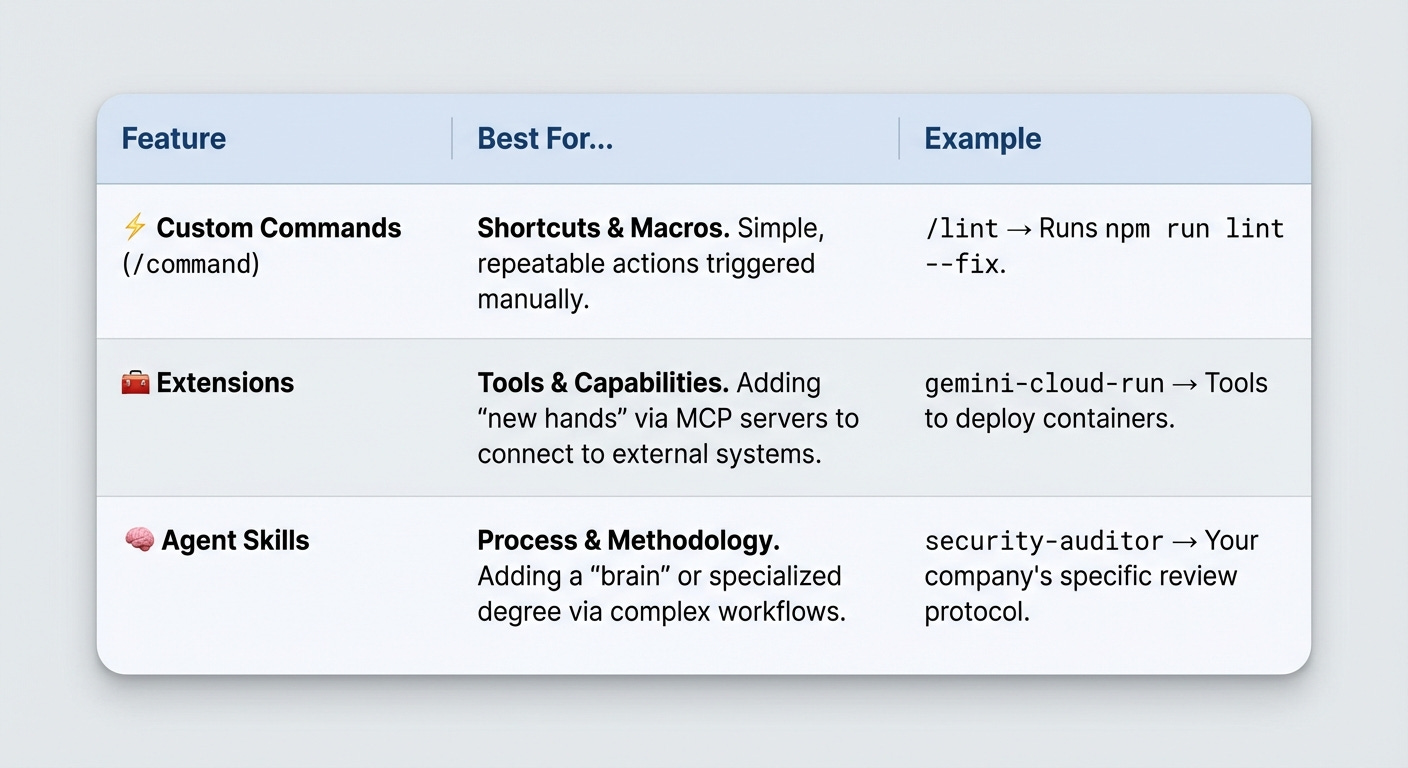

When to Use What?

Finally

Agent Skills allow us to architect Just-In-Time Context. You are teaching the AI not just what to write, but how your team builds software. And thanks to the open standard, that knowledge is now portable.

Gemini CLI Implementation: ActivateSkillTool, getFolderStructure and skillManager.ts.
